Hi everyone, In this article, I will transfer a table on Mysql to the HDFS file system using sqoop.

Sqoop provides the ability to transfer from any RDBMS to an HDFS system.

Now let’s continue with the example, Let’s download a sample set from kaggle

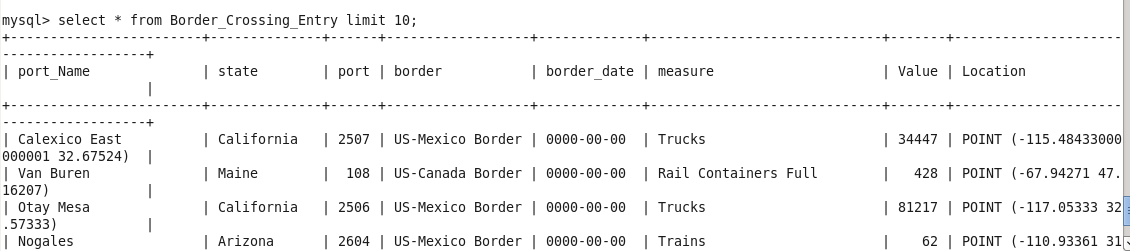

Move the csv file to the virtual machine through WinSCP or a similar program. connect to mysql and create a table that fits the data set.

create table Border_Crossing_Entry ( port_Name varchar(50), state varchar(50), port int, border varchar(50), border_date date, measure varchar(50), Value int, Location varchar(50));

This script transfers the data from our csv file to the table.

load data local infile '/home/cloudera/Border_Crossing_Entry_Data.csv' into table Border_Crossing_Entry fields terminated by ',' enclosed by '"' lines terminated by '\n' (port_Name,state,port,border,border_date,measure,Value,Location);

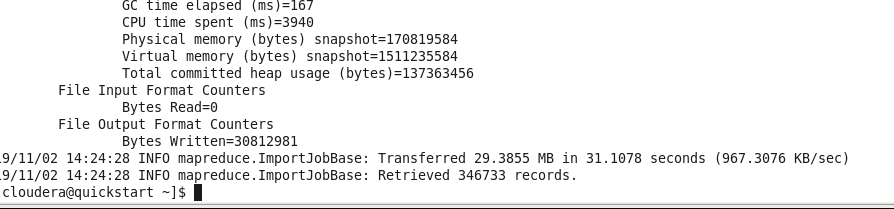

Now let’s create the target directory in HDFS.

hadoop fs -mkdir /user/cloudera/sqoop_exp For import, let’s set the sqoop parameters and start the operations.

sqoop import --connect jdbc:mysql://quickstart:3306/retail_db --username=retail_dba --password=cloudera --table Border_Crossing_Entry --m 1 --target-dir /user/cloudera/sqoop.exp

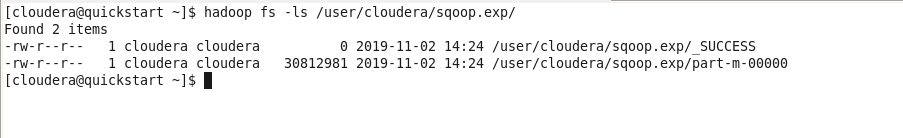

Finally, let’s take a look at the file we show as output to hdfs.

hadoop fs -ls /user/cloudera/sqoop.exp/

If you look inside the file with m-00000, you can see that the data set is there.

See you in the next article…

IT Tutorial IT Tutorial | Oracle DBA | SQL Server, Goldengate, Exadata, Big Data, Data ScienceTutorial

IT Tutorial IT Tutorial | Oracle DBA | SQL Server, Goldengate, Exadata, Big Data, Data ScienceTutorial